By Leigh Hopwood, CEO of the CCMA

Your go-to space for the industry's latest news.

By Steve Morrell, Managing Director at ContactBabel Ltd.

By Brian Manusama, Executive Partner at Actionary

By Michelle Beeson, Senior Analyst at Forrester

Almost overnight, Artificial Intelligence (AI) grabbed the attention of organisations and...

By Fiona Passantino, Founder of Executive Storylines

AI is one of the world’s fastest growing...

By Colin Shaw, Founder of Beyond Philosophy and Host of ‘The Intuitive Customer Podcast’

By Sandra Haworth, Marketing Director at Cirrus

2023 presented organisations with a wide array of challenges and opportunities. Now, at the...

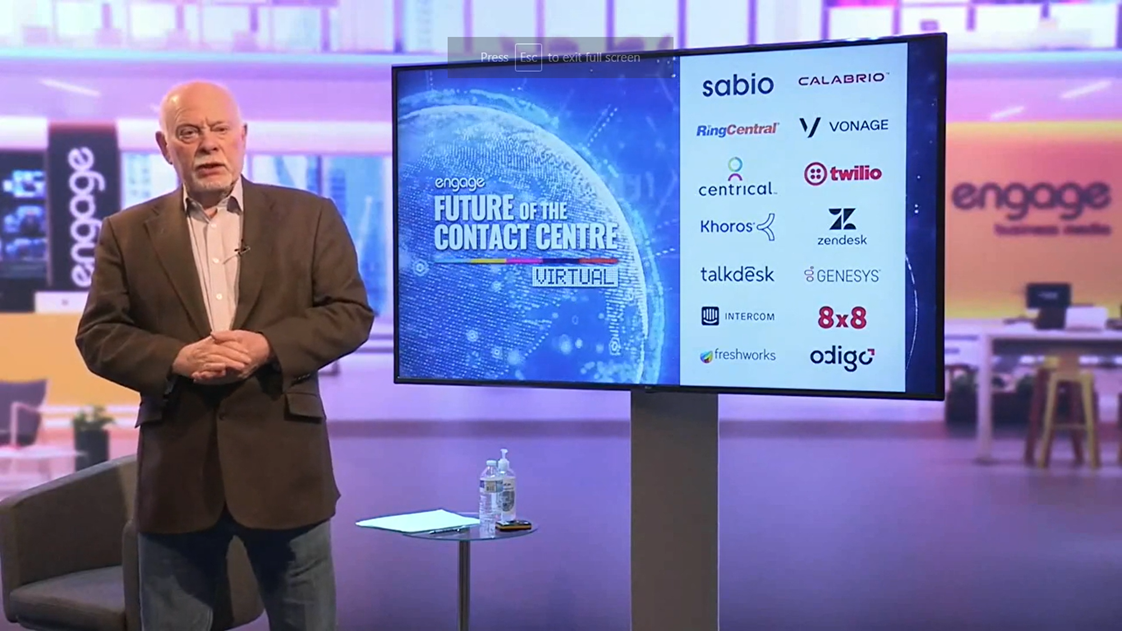

Our first event of 2024, the Future of Customer Contact Conference, took place on the 8th of...

An interview with the founder of Rod Jones Contact Centre Consulting

Customer expectations are evolving at a faster rate than ever before. Knowing this and...

An interview with the CEO and Founder of Customer Experience Consultancy Ltd.

An interview with Amanda Whiteside, Head of GTM Revenue Enablement at Freshworks

An interview with the CEO of the CCMA (Call Centre Management Association)

An interview with the CEO of The Institute of Customer Service

In our recent Engage Talks studio broadcast, industry experts Stuart Dorman and James Cockbill...

An interview with the winners of the Best Use of Customer Insight award

An interview with the winner of the Best Customer Contact Transformation award

2023 was a year of transformation. Organisations and consumers alike grappled with the consequences...

An interview with the winner of two categories in the 2023 Engage Awards

An interview with the winner of the Best Use of Voice of the Customer award

An interview with the author of CustomHER Experience

By Oliwia Berdak, VP research director, Forrester

By Blackhawk Network (BHN)

Engage Business Media worked in partnership with Ipsos to deliver a unique cross-industry study,...

“I’m looking to network, but mostly I’m looking to learn.”

With 2023 soon coming to an end, we are reflecting on the conferences we held, the ideas presented...

Find out how the 2023 winners impressed our judges this year.

A pre-event interview with the CEO of The Institute of Customer Service

A pre-event interview with the Customer Experience Manager of Ella’s Kitchen

A pre-event interview with BNP Paribas Real Estate's Head of Experience

By Simon Kriss, Chief Innovation Officer for the CX Innovation Institute, author of “The AI...

By Charlie Adams, Head of Customer Excellence at Castles Technology

Celebrity headliners, CX experts, and industry-leading brands will explore human-centricity’s role...

A pre-event interview with the Head of Customer Information at Arriva Rail London

By Rod Jones, CX Industry Specialist

By Steven van Belleghem, keynote speaker and author

By Simon Kriss, Chief Innovation Officer for the CX Innovation Institute

An interview with Kramp’s Customer-Centric Culture Manager

By Rod Jones, CX industry specialist

To mark National Customer Service Week this year (October 2-6), we are looking back at the work we...

By Colin Shaw, Founder and CEO of Beyond Philosophy LLC

By Charlie Adams, Head of Customer Excellence at Castles Technology

The 13th edition of Europe’s largest customer engagement event, the Customer Engagement Summit, is...

We are delighted to announce the finalists of our 2023 Engage Awards – the UK’s only Awards...

By Ian Gibbs, Director of Insight at The Data & Marketing Association (DMA UK)

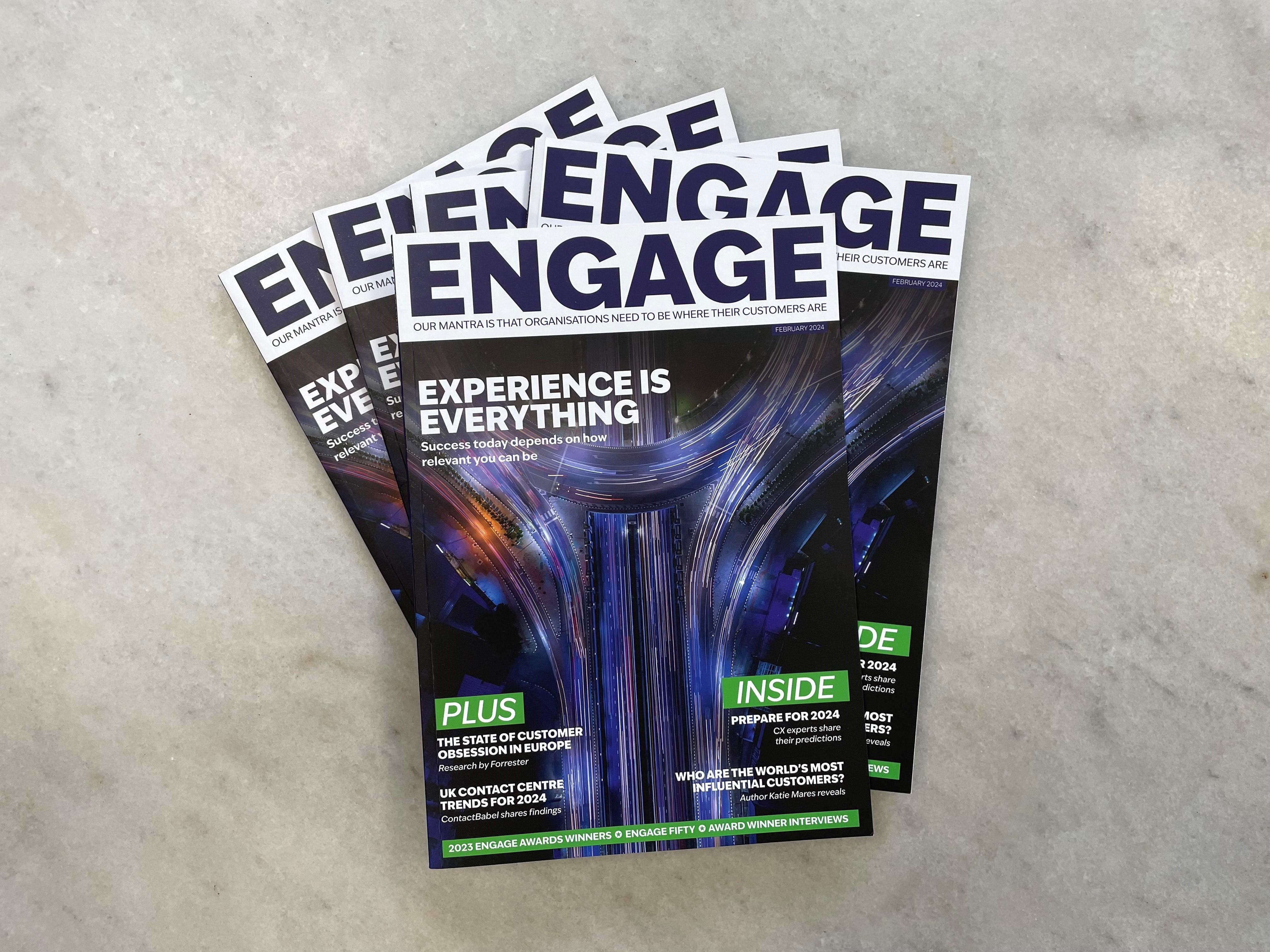

Our mantra for over a decade since 2010 has been that organisations need to be where their...

An interview with CX enthusiast and keynote speaker, Steven van Belleghem

By Simon Kriss, Chief Innovation Officer for the CX Innovation Institute

An interview with the CEO of The Institute of Customer Service (ICS)

By Marco Ndrecaj, Director of Contact Centre Services at SSCL

An interview with the Founder of BrandLove

By Rod Jones, CX industry specialist

By Simon Kriss, Author of The AI Empowered Customer Experience

By Zsuzsa Kecsmar, Co-founder and Chief Strategy Officer of enterprise loyalty technology provider,...

Judging will commence on August 28th

More and more brands are offering unique experiences for their customers. As a result, customer...

An interview with the Founder and CEO of Solutioneers

To help marketers better understand their customers’ preferences using data insights, Director of...

By Angel Maldonado, CEO of Empathy.co

By Rod Jones, CX industry specialist

We are delighted to announce that the Engage Awards entry deadline has been extended to August 14th...

An interview with OneWeb’s Chief of Staff & Head of PMO

By Gerry Brown, Chief Customer Rescue Officer, Customer Lifeguard

An interview with the Head of Worldwide Customer Optimisation and Enablement at Amazon Web Services

By Stephen Yap, Research Director, CCMA UK

By Taavi Kotka, CEO and Founder of Koos

Did you know that the American rock band Van Halen had a 'no brown M&Ms' clause in their concert...

An interview with Virgin Media O2’s Director of Sales, Retention & Retail Stores

In November 2022, we awarded 10 organisations for their outstanding and exemplary achievements in...

A post-event interview with NatWest’s Integration and Partnerships Lead (Youth and Families)

Earlier this year, we held our annual Customer Engagement Transformation Conference, where 40...

A post-event interview with the Head of Service at MANUAL

Last week, we held our annual Customer Engagement Transformation Conference, welcoming over 500 CX...

By Sergio Martin, Global Customer Support Manager (Resolutions) at IKEA

By Taavi Kotka, CEO and Founder of Koos

The 2023 Customer Engagement Transformation Conference took place on the 14th of June – and it was...

By Gerry Brown, Chief Customer Rescue Officer, Customer Lifeguard

By Dave D’Arcy, Founder of Laughing Leadership

By Karl Lillrud, a renowned expert in the field of Artificial Intelligence

A pre-event interview with the Go-Ahead Group’s Customer and Commercial Director

A pre-event interview with Beauty Pie’s Senior Member Happiness Manager

A pre-event interview with Utilita’s Chief Customer Contact Officer

A pre-event interview with Jaguar Land Rover’s Client Care Director (UK)

A pre-event interview with Sky’s Head of Digital: CX & Tech Futures

A pre-event interview with a much-anticipated speaker

In less than two weeks, you will have the chance to hear from representatives of world-renowned...

The Engage Customer office is buzzing with excitement now that there are only two weeks to go until...

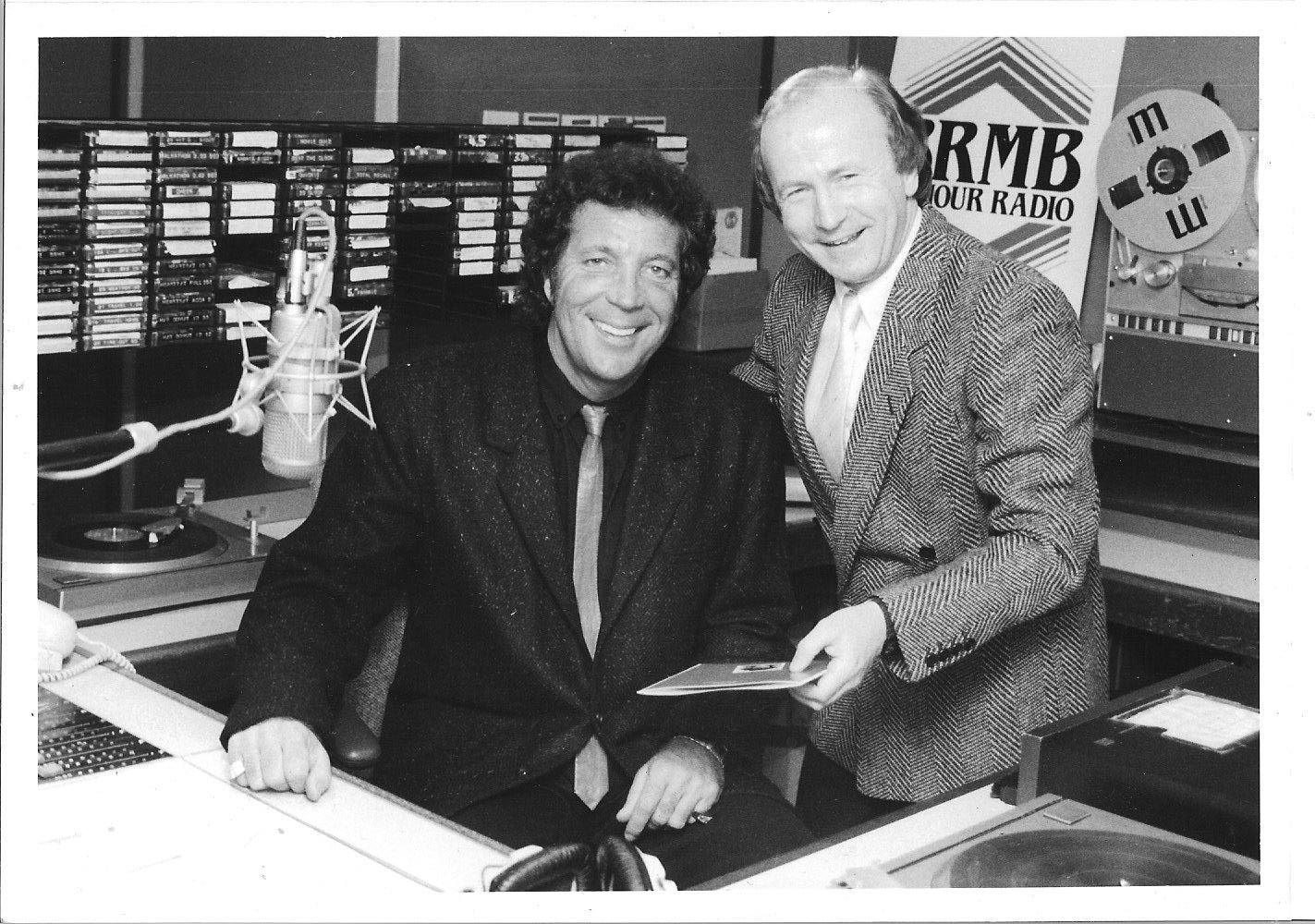

Mike Owen with Tom Jones following an on-air interview on his ‘Boy From Nowhere’ release in 1987

A few weeks ago, we launched our Meet the Judge campaign to introduce the industry experts who will...

Today, organisations are faced with multiple challenges when trying to navigate the current CX...

By Mike Kiersey, Head of the EMEA Technology Organisation, Boomi

Download to watch on-demand or register now to secure your spot

In just over a month, Engage Customer will hold its 2023 Customer Engagement Transformation...

On June 14th, Engage Customer is holding the 2023 Customer Engagement Transformation Conference. At...

Earlier this year, we held our 2023 Future of Customer Contact Conference, where attendees had the...

In January, we announced that the 2023 Engage Awards were officially open for entries. Since then,...

In the last few years, businesses have had to take a step back and re-examine the way they operate....

This February, we had the pleasure of hearing from Michelle Ansell at our Future of Customer...

This week we had the pleasure of chatting with Ekaterina Mamonova, Global Marketing and Customer...

The eye of a needle is a term used by Jesus as recorded in the synoptic gospels: “I tell you the...

An interview with Sky’s CX Manager, Dale Danville

Earlier this year, we held the 2023 Future of Customer Contact Conference at The Brewery in London....

To say that the past few years have been difficult for both organisations and individuals would be...

Do companies set out to win when they embark on a new project or change programme? Probably not,...

By Leigh Hopwood, CEO of the Contact Centre Management Association (CCMA)

By Jennifer Olson, Executive Vice President of Customer Success at Align Technology

By James Hunnybourne, CRO at Ultima

To mark International Women’s Day this year, I have spent hours researching and reading the stories...

An interview with Moneypenny’s Group CEO

Sunseeker International won the Best Use of Innovation in Customer Engagement Award at our 2022...

An interview with Chandni Bhatt – Senior Manager, Member Happiness at Beauty Pie

An interview with Sky’s Service Strategy Manager

An interview with the CEO of the Conversation Design Institute. Earlier this month, we held our...

An interview with Head of Services at Naked Wines

Last week, we held our first event of the year: the Future of Customer Contact Conference. Not only...

Customer loyalty may not be at the front of your mind when you’re running marketing campaigns to...

With the rapid rise in inflation and the cost-of-living crisis, it is not surprising that people...

The world-leading provider of tech-enabled language, content, and intellectual property services...

It was great running a 90-minute breakout workshop at the Future of the Contact Centre Summit in...

Having automated, proactive conversations with your customers, driven by AI, is only possible if...

For AI to cement its place within consumers’ lives over the next 10 years, companies need to employ...

The private bank and wealth manager Coutts earned the Best Use of Technology in Customer Engagement...

ContactBabel, the leading research and analysis firm for the contact centre and CX industry, has...

This month’s Future of Customer Contact Conference will be headlined by Andrew Davis, one of...

An interview with Nicholas Brice, CEO of Soul Corporations and Sarah Hood, Global Head of...

With just two weeks to go until our upcoming Future of Customer Contact conference, we would like...

We are excited to announce that there are only two weeks left until our first event of the year:...

During the pandemic, many organisations turned to the digital space as the world was made to stay...

We are thrilled to announce that entries for the 2023 Engage Awards are now open!

Emails and phone calls are proven ways to run a business but in this fast-paced technological...

In just three weeks, Engage Business Media will hold its first event of the year: the Future of...

The 12th edition of the Customer Engagement Summit took place at the Westminster Park Plaza in...

As 2022 drew to a close, most of us craved a period of calmness, both economically and emotionally....

We are proud to announce that Engage Business Media has raised £2,327.50 for Cancer Research. This...

Experian, Sky, and Ford reveal how businesses can adapt to changes in customer behaviour.

Marketers understand just how important it is to deliver a personalised and relevant customer...

Earlier this month, Engage Business Media held the annual International Engage Awards at the...

The Guardian explains how it halved churn by perfecting the fundamental basics of its customer...

It takes a lot for a project to be a hit with all the judges and make the final of the Engage...

On the 15th of November, more than 800 people walked through the doors of the Westminster Park...

Earlier this week I attended the 2022 Customer Engagement Summit, hosted by the team here at Engage...

On 15th November 2022, Engage Business Media were thrilled to welcome 400 attendees to celebrate...

Are you in SHAPE to lead or influence the modern digital-hybrid organisation?

Chatbots can help you construct a seamless customer experience, saving you time and money. Their...

Research by the University of California looked into how some of our digital practices are...

We’re living in a transformational period when it comes to customer experience (CX). With the voice...

In the lead-up to the 2022 Customer Engagement Summit, we spoke to Abdul Kahled, Head of Digital...

In the lead-up to the 2022 Customer Engagement Summit, we spoke to William Agnew, Experience Lead -...

Customer experience insights give you an overview of how consumers currently perceive your...

Moving your data to the cloud provides extra security, and with everyone working from a centralised...

Ahead of our flagship event, the Customer Engagement Summit, we spoke with Cheryl Graham, Service...

Belron studied Chinese wisdom to help it build a new omnichannel engagement framework to the joyful...

How customers view your business depends on the experience you offer during their interactions with...

Customer experience is constantly changing. With the emergence of new technologies and processes,...

By Yasmin Peiris, Director, Customer Success, Mapp Digital

Global retail sales will see reduced...

Need a little inspiration for your new CX strategy? We have found an insightful piece by SoftClouds...

Delivering a positive customer experience is at the heart of what we do at the Financial Services...

Don’t be scared to cut your losses if it’s blatantly obvious your most precious idea is heading...

I’ve had the pleasure of living and breathing issues of customer experience (CX), employee...

We talk a lot about how to nurture the customer through intelligent marketing processes, brand...

With a boom in demand for its customer service team, Dreams needed technology that would empower...

The operator said the move was part of ‘fundamental changes’ to its business strategy as it bids to...

American Express, the financial services brand, describes itself as “a global services company that...

Regular Engage Customer contributor Mark Hillary has just published a new book titled ‘Don’t Fear...

Customer service teams are set to undergo major digital transformation and growth over the next...

The trusty phone line has been tested since COVID-19 disrupted just about all aspects of life. The...

With consumer behaviour changing rapidly over recent years, customer engagement is now one of the...

The ECCCSA Award finalists for 2021 were just announced a few days ago. The awards cover a number...

With this year’s Knowledge Management Conference just a few weeks away, we’ve decided to sit down...

In this piece, Julien Rio, Marketing Director at RingCentral Engage Digital, talks through five...

Leading online fashion retailer ASOS has topped a 2019 Retail Benchmark Report analysing online...

According to Salesforce research, 80% of consumers now believe the experience a company provides is...
